Source: The Conversation – USA (2) – By John Weigand, Professor Emeritus of Architecture and Interior Design, Miami University

Colleges and universities are struggling to stay afloat.

The reasons are numerous: declining numbers of college-age students in much of the country, rising tuition at public institutions as state funding shrinks, and a growing skepticism about the value of a college degree.

Pressure is mounting to cut costs by reducing the time it takes to earn a degree from four years to three.

Students, parents and legislators increasingly prioritize return on investment and degrees that are more likely to lead to gainful employment. This has boosted enrollment in professional programs while reducing interest in traditional liberal arts and humanities majors, creating a supply-demand imbalance.

The result has been increasing financial pressure and an unprecedented number of closures and mergers, to date mostly among smaller liberal arts colleges.

To survive, institutions are scrambling to align curriculum with market demand. And they’re defaulting to the traditional college major to do so.

The college major, developed and delivered by disciplinary experts within siloed departments, continues to be the primary benchmark for academic quality and institutional performance.

This structure likely works well for professional majors governed by accreditation or licensure, or more tightly aligned with employment. But in today’s evolving landscape, reliance on the discipline-specific major may not always serve students or institutions well.

As a professor emeritus and former college administrator and dean, I argue that the college major may no longer be able to keep pace with what employers demand: combinations of knowledge that cross multiple academic disciplines, paired with career readiness skills. Nor may it offer the flexibility students need to best position themselves for the workplace.

Students want flexibility

I see students arrive on campus each year with different interests, passions and talents – eager to stitch them into meaningful lives and careers.

A more flexible curriculum is linked to student success, and students now consult AI tools such as ChatGPT to figure out course combinations that best position them for their future. They want flexibility, choice and time to redirect their studies if needed.

And yet, the moment students arrive on campus – even before they apply – they’re asked to declare a major from a list of predetermined and prescribed choices. The major, coupled with general education and other college requirements, creates an academic track that is anything but flexible.

Not surprisingly, around 80% of college students switch their majors at least once, suggesting that more flexible degree requirements would allow students to explore and combine diverse areas of interest. And the number of careers, let alone jobs, that college graduates are expected to have will only increase as technological change becomes more disruptive.

As institutions face mounting pressures to attract students and balance budgets, and the college major remains the principal metric for doing so, the curriculum may be less flexible now than ever.

How schools are responding

In response to market pressures, colleges are adding new high-demand majors at a record pace. Between 2002 and 2022, the number of degree programs nationwide increased by nearly 23,000, or 40%, while enrollment grew only 8%. Some of these majors, such as cybersecurity, fashion business or entertainment design, arguably connect existing disciplines rather than stand out as distinct fields. Because they overlap with what schools already offer, these new majors siphon enrollment from lower-demand programs within the institution and compete with similar new majors at competitor schools.

At the same time, traditional arts and humanities majors are adding professional courses to attract students and improve employability. Yet this adds credit hours to the degree while often duplicating content already available in other departments.

Importantly, while new programs are added, few are removed. The challenge lies in faculty tenure and governance, along with a traditional understanding that faculty set the curriculum as disciplinary experts. This makes it difficult to close or revise low-demand majors and shift resources to growth areas.

The result is a proliferation of under-enrolled programs, canceled courses and stretched resources – leading to reduced program quality and declining faculty morale.

Ironically, under the pressure of declining demand, there can be perverse incentives to increase the credit hours required in a major or in general education, either as a way of garnering more resources or to add courses aligned with faculty interests. All of this continues to expand the curriculum and stress available resources.

Universities are also wrestling with the idea of liberal education and how to package the general education requirement.

Although liberal education is increasingly under fire, employers and students still value it.

Students’ career readiness skills – their ability to think critically and creatively, to collaborate effectively and to communicate well – remain strong predictors of future success in the workplace and in life.

Reenvisioning the college major

Even if students must still complete a major to earn a degree, colleges can allow them to bundle smaller modules – such as variable-credit minors, certificates or course sequences – into a customizable, modular major.

This lets students, guided by advisers, assemble a degree that fits their interests and goals while drawing from multiple disciplines. A few project-based courses can tie everything together and provide context.

Such a model wouldn’t undermine existing majors where demand is strong. For others, where demand for the major is declining, a flexible structure would strengthen enrollment, preserve faculty expertise rather than eliminate it, attract a growing number of nontraditional students who bring to campus previously earned credentials, and address the financial bottom line by rightsizing curriculum in alignment with student demand.

One critique of such a flexible major is that it lacks depth of study, but it is precisely the combination of curricular content that gives it depth. Another criticism is that it can’t be effectively marketed to an employer. But a customized major can be clearly named and explained to employers to highlight students’ unique skill sets.

Further, as students increasingly try to fit cocurricular experiences – such as study abroad, internships, undergraduate research or organizational leadership – into their course of study, these can also be approved as modules in a flexible curriculum.

It’s worth noting that while several schools offer interdisciplinary studies majors, these are often overprescribed or don’t grant students access to in-demand courses. For a flexible-degree model to succeed, course sections would need to be available and added or deleted in response to student demand.

Several schools also now offer microcredentials – skill-based courses or course modules, which increasingly include courses in the liberal arts. But these typically must be completed in addition to the requirements of the major.

We take the college major for granted.

Yet it’s worth noting that the major is a relatively recent invention.

Before the 20th century, students followed a broad liberal arts curriculum designed to create well-rounded, globally minded citizens. The major emerged as a response to an evolving workforce that prioritized specialized knowledge. But times change – and so can the model.

John Weigand does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. Why the traditional college major may be holding students back in a rapidly changing job market – https://theconversation.com/why-the-traditional-college-major-may-be-holding-students-back-in-a-rapidly-changing-job-market-258383

to

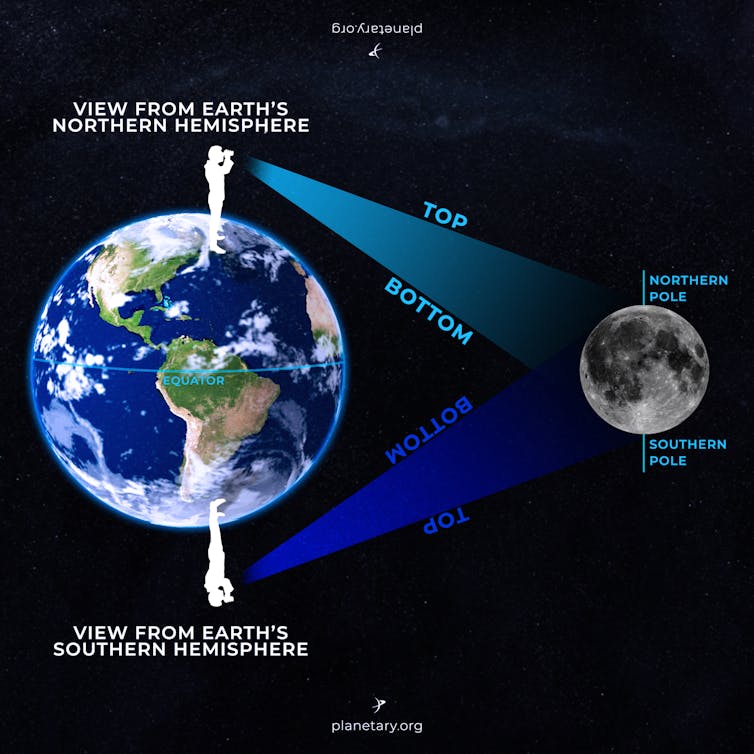

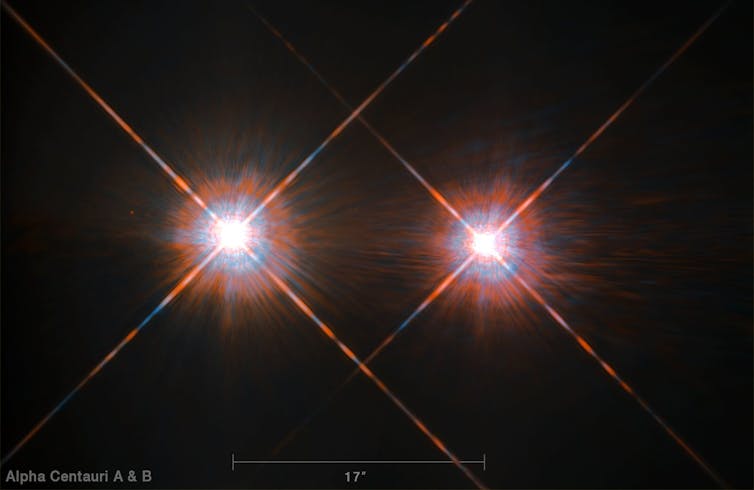

to  . All the craters that I was used to seeing on the top of the Moon from Wisconsin were now on the bottom – because I was looking at the Moon from the Southern Hemisphere instead of the Northern Hemisphere.

. All the craters that I was used to seeing on the top of the Moon from Wisconsin were now on the bottom – because I was looking at the Moon from the Southern Hemisphere instead of the Northern Hemisphere.