Source: The Conversation – USA – By Masako Toki, Senior Education Project Manager and Research Associate, Nonproliferation Education Program, Middlebury

Eighty years ago, in August 1945, the cities of Hiroshima and Nagasaki were incinerated by the first and only use of nuclear weapons in war. By the end of that year, approximately 140,000 people had died in Hiroshima and 74,000 in Nagasaki.

Those who survived – known as Hibakusha – have carried their suffering as living testimony to the catastrophic humanitarian consequences of nuclear war, with one key wish: that no one else will suffer as they have.

Now, in 2025, as the world marks 80 years of remembrance since those bombings, the voices of the Hibakusha offer not only memory, but also moral clarity in an age of growing peril.

As someone who focuses on nuclear disarmament and has heard Hibakusha testimonies in my native Japanese, I have been enthusiastically promoting disarmament education grounded in their voices and experience. I believe their message is more vital than ever at a time of rising nuclear risk. Nuclear threats have reemerged in global discourse, breaking long-standing taboos against even talking about their use. From Russia and Europe to the Middle East and East Asia, the possibility of nuclear escalation is no longer unthinkable.

Japan’s deepening reliance on deterrence

Ironically, increasing nuclear threats are contributing to further reliance on nuclear deterrence, the strategy of preventing attack by threatening nuclear retaliation, rather than renewed efforts toward nuclear disarmament, which seeks to eliminate nuclear weapons entirely.

Nowhere is this contradiction more visible than in Japan. While the Hibakusha have long stood as global advocates for nuclear abolition, Japan’s approach to national security has placed growing emphasis on the role of nuclear deterrence.

In the face of regional threats, the Japanese government has strengthened its dependence on U.S. nuclear protection – even as the survivors of Hiroshima and Nagasaki warn not only of the dangers of relying on nuclear weapons for security, but also of the profound moral failure such reliance represents.

Listen to Hibakusha voices

For eight decades, the Hibakusha have shared their stories to prevent future tragedy – not to assign blame, but to awaken conscience and spark action.

Masako Wada, assistant secretary general of Nihon Hidankyo, a nationwide organization of atomic bomb survivors working for the abolition of nuclear weapons, was just under 2 years old when the atomic bomb was dropped on Nagasaki. Her home, 1.8 miles from the blast center, was shielded by surrounding mountains, sparing her from burns or injury. Though too young to remember the bombing herself, she grew up hearing about it from her mother and grandfather, who witnessed the devastation firsthand.

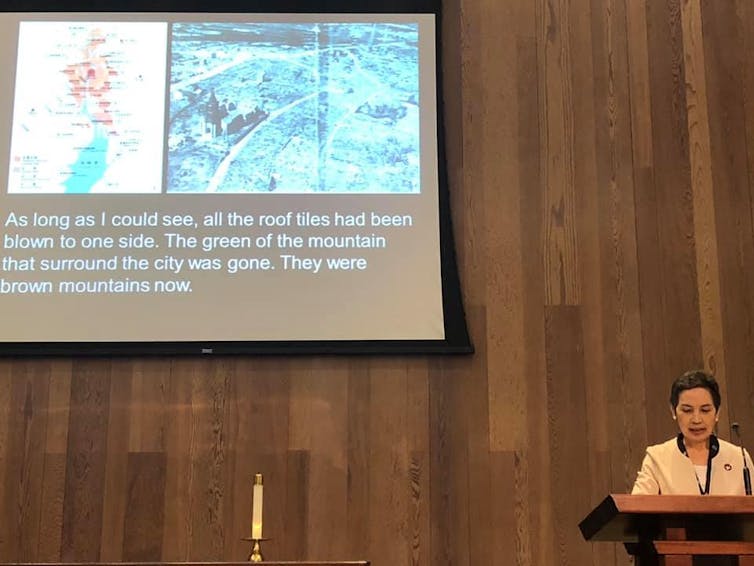

In July 2025, at a nuclear risk reduction conference in Chicago, Wada told the attendees:

“The risk of using nuclear weapons has never been higher than it is now. … Nuclear deterrence, which intimidates other countries by possessing nuclear weapons, cannot save humanity.”

In a piece she wrote for Arms Control Today that same month, she further implored:

“The Hibakusha are the ones who know the humanitarian consequences of the use of nuclear weapons. We will continue to convey that reality. Please listen to us, please empathize with us. Find out what you can do and take action together with us. Nuclear weapons cannot coexist with human beings. They were created by humans; let us assume the responsibility to abolish them with the wisdom of public conscience.”

This plea – rooted in lived experience and moral responsibility – was recognized globally when the 2024 Nobel Peace Prize was awarded to Nihon Hidankyo. The award honored not only the survivors’ suffering, but also their decades-long commitment to preventing future use of nuclear weapons through education, activism and testimony.

A dwindling number

But time is running out. Most Hibakusha were children or young adults in 1945. Today, their average age is over 86. In March 2025, the number of officially recognized Hibakusha fell below 100,000, according to Japan’s Ministry of Health.

As Terumi Tanaka, a Nagasaki survivor and longtime leader of Nihon Hidankyo, said at the Nobel Peace Prize ceremony:

“Ten years from now, there may only be a handful of us able to give testimony as firsthand survivors. From now on, I hope that the next generation will find ways to build on our efforts and develop the movement even further.”

The role of empathy in disarmament education

Empathy is not a luxury in disarmament education – it is a necessity. Without it, nuclear weapons remain abstract. With it, they become personal, real and morally unacceptable.

That’s why disarmament education begins with human stories. The Hibakusha testimonies illuminate not only the physical destruction caused by nuclear weapons, but also the long-term trauma, discrimination and intergenerational pain that follow. They remind us that nuclear policy is not just a matter of strategy – it is a question of human survival. Nuclear weapons are the only weapons ever created with the power to annihilate all of humanity – and that makes disarmament not just a political issue, but a moral imperative.

Yet opportunities for young people to learn about nuclear risks, or hear from the Hibakusha directly, are extremely limited. In most countries, these issues are absent from school and university classrooms. This lack of education feeds ignorance and, in turn, complacency – allowing the flawed logic of deterrence to remain unchallenged.

Disarmament education that puts empathy and ethics at its center, along with survivors’ voices, can empower the next generation not only with knowledge, but also with the moral strength to choose their path.

From remembrance to responsibility

Commemorating 80 years since the atomic bombings of Hiroshima and Nagasaki is not about history alone. It is about the future. It is about what people choose to remember – and what people choose to do with that memory.

The Hibakusha have never sought revenge. Their message is clear: This can happen again. But it doesn’t have to.

The Hibakusha’s journey shows that human beings are not destined to remain divided, nor are they doomed to repeat cycles of destruction. In the face of unimaginable loss, many Hibakusha chose not to dwell on anger or seek retribution, but instead to speak out for the good of all humanity. Their activism has been marked not by bitterness, but by an unwavering commitment to peace, empathy and the prevention of future suffering. Rather than directing their pain toward blame, they have transformed it into a powerful appeal to conscience and global solidarity. Their concern has never been only for Japan – but for the future of the entire human race.

That moral clarity, grounded in lived experience, remains profoundly instructive. In a world increasingly filled with conflict and fear, I believe there is much to learn from the Hibakusha. Their testimony is not just a warning – it is a guide.

I try to listen, and I urge others to truly listen, to what they have to say. I seek the company of people who also refuse complacency, question the legitimacy of nuclear deterrence, and work for a future where human dignity, not mutual destruction, defines human security.

Masako Toki does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. Survivors’ voices 80 years after Hiroshima and Nagasaki sound a warning and a call to action – https://theconversation.com/survivors-voices-80-years-after-hiroshima-and-nagasaki-sound-a-warning-and-a-call-to-action-262174